Terraform

Obviously, after listing my next areas of study in the previous post, I would pick up something else entirely – Terraform. Back in James Turnbull land with this marvellous book. I had been looking at Ansible but was put off by all the Vagrant stuff, which seemed too anti-container to me.

I have had some crunchy issues to work through. Frustrating at times but I have learnt a hell of a lot working through them – as ever learning a lot of newbie stuff on top.

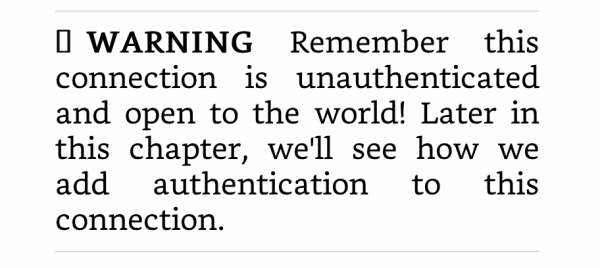

One such lesson was what happens if you check your AWS access keys into a public GitHub repo. Turns out you get the attention of AWS, and to a lesser extent GitHub, pretty damn quick. Very impressive response, particularly as I had no idea what I had done initially.

As well as another lesson in just how easily an idiot can introduce a vulnerability, I had to figure out how to do the following to get back onto an even keel:

- rotate IAM access keys

- remove commits from a public repo (admittedly not really necessary once the step above had been completed)
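For the record, the key rotation part boils down to roughly three IAM calls. Below is a minimal sketch in Python, assuming a boto3-style IAM client is passed in. The function name `rotate_access_key` and the user name and key ID in the usage note are made up for illustration, and this is not necessarily the exact route I took.

```python
def rotate_access_key(iam, user_name, old_key_id):
    """Swap a leaked access key for a fresh one and return the new key details.

    `iam` is assumed to be a boto3 IAM client (boto3.client("iam")) or
    anything exposing the same three methods.
    """
    # 1. Create the replacement key. Note an IAM user can hold at most two
    #    access keys, so this fails if two already exist.
    new_key = iam.create_access_key(UserName=user_name)["AccessKey"]

    # 2. Deactivate the leaked key so it stops working straight away.
    iam.update_access_key(
        UserName=user_name,
        AccessKeyId=old_key_id,
        Status="Inactive",
    )

    # 3. Delete the leaked key for good.
    iam.delete_access_key(UserName=user_name, AccessKeyId=old_key_id)

    # The returned dict includes the new AccessKeyId and SecretAccessKey;
    # keep them out of the repo this time.
    return new_key
```

With boto3 installed and working credentials, usage would look something like `rotate_access_key(boto3.client("iam"), "terraform-user", "AKIA...")`, where both arguments are placeholders. With a key that is already public, it may be wiser to run step 2 before anything else.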

Big hugs to AWS for their response to this issue.

As the Terraform tutorial makes extensive use of Git, this has also been a great way to reinforce my Git skills.

I have realised I am in a space where I have learnt enough to be bold but not enough to avoid doing dangerous things. As with my Docker API faux pas, I am grateful I am doing this on my personal AWS account. Like Luke Skywalker in The Empire Strikes Back, taking on Vader before finishing his training. Handy (haha) but ultimately doomed.

Weekly Webinar

Not been great at sticking to this goal, but a colleague referred me to this – particularly relevant to recent work challenges.

Back on SRE

Reading-wise, the same colleague recommended the SRE book as a capacity modelling resource. He didn’t know I had a copy. Perfect opportunity to jump forward a few chapters to read about Intent-Based Planning.

Black Friday

When trying to find Ansible learning materials that didn’t use Vagrant, I came across Udemy, which had some Black Friday deals. I have purchased Kubernetes, Ansible and Python courses.

My Picks

Blade Runner 2049. Just because. I probably love it for all the reasons others don’t.

Curb Your Enthusiasm. Makes Mondays worthwhile. Will miss it when it is done.

Noel Gallagher – Who Built the Moon? I adore this. And I am not a slavish follower of all things Oasis either. I took my copy of Standing on the Shoulder of Giants back to the shop on the grounds it was pants.